Introduction

In this post, I briefly write up what I did in my PhD research at Heriot-Watt University and the main idea behind the thesis. This post was first published in March 2019. In January 2022, I updated it and added links to my research contributions.

The Team

From 2013 to 2018, I worked on my PhD project at the Department of Computer Science, School of Mathematical and Computer Sciences, Heriot-Watt University (Scotland), under the supervision of Nick Taylor. The research idea took shape while I was working at the Technical University of Delft. It grew out of the publication “A User Modeling Oriented Analysis of Cultural Backgrounds in Microblogging,” which received the best paper award at the ASE International Conference on Social Informatics in Washington, D.C., USA, on 14 December 2012 (see SlideShare).

The topic is at the intersection of social networking, communication, and Artificial Intelligence. The research resulted in several publications and a PhD thesis: “Mining Microblogs for Culture-awareness in Web Adaptation.”

None of this would have been possible without the love and support of my family and friends, and without the best research supervisors in the world, who gave me plenty of advice and much food for thought about the project, the research process, and life.

Many thanks to my supervisors, Professors Nick Taylor and Yanguo Jing, and to my friends and family!

My PhD supervisor gives me a "thumbs up".

My PhD graduation congregation at Heriot-Watt University. With love from Scotland (left). The Vice-Chancellor greets new PhD graduates (right).

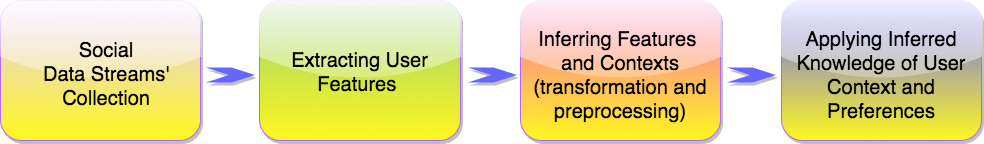

Meeting cultural web user needs

Prior studies in sociology and human-computer interaction indicate that people from different countries and cultural backgrounds tend to have distinct preferences in real-life communication and in their use of web and social media applications. To adapt web applications to users' cultural needs, we could ask users to share their location and then provide a relevant design and content. There are also automatic means of estimating a user's approximate location from the IP address and web client information. However, this functionality is often inaccurate (consider travellers or expatriates) or unavailable. Social media, on the other hand, offers a wealth of information on user traits, which can be used to adapt applications to personal needs.

I proposed inferring users' cultural origins from their microblogging patterns, that is, from how they use social media features. For instance, people microblogging from the Netherlands and Germany are more likely to share links, while people from Japan reply the most. However, this is only one of the cues from which we can infer user origins; social media reveals many more. I experimented with several methods of inferring user origins from Twitter data. Please read on to find out “how.”
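
To make "microblogging patterns" concrete, here is a minimal sketch that turns a user's tweets into feature-usage rates, meaning the share of tweets that contain links, replies, mentions, and hashtags. The regular expressions and the `feature_rates` name are illustrative assumptions, not the thesis code. The Twitter API also returns these features as structured fields, which would be more reliable than regex matching.

```python
import re
from typing import Dict, List

# Illustrative patterns (assumptions): a rough approximation of Twitter's entity parsing.
URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"(?<!\w)@\w+")  # skips e-mail addresses such as a@b.com
HASHTAG_RE = re.compile(r"(?<!\w)#\w+")


def feature_rates(tweets: List[str]) -> Dict[str, float]:
    """Return the share of a user's tweets that use each microblogging feature.

    A tweet counts as a reply if it starts with an @mention. This simplifies
    the reply metadata that the Twitter API provides.
    """
    if not tweets:
        return {"links": 0.0, "replies": 0.0, "mentions": 0.0, "hashtags": 0.0}
    n = len(tweets)
    counts = {
        "links": sum(1 for t in tweets if URL_RE.search(t)),
        "replies": sum(1 for t in tweets if t.lstrip().startswith("@")),
        "mentions": sum(1 for t in tweets if MENTION_RE.search(t)),
        "hashtags": sum(1 for t in tweets if HASHTAG_RE.search(t)),
    }
    return {name: count / n for name, count in counts.items()}


if __name__ == "__main__":
    sample = [
        "Reading about microblogs https://example.org/post",
        "@friend thanks, see you tomorrow!",
        "Great talk at the #socinfo conference",
        "Just a plain status update",
    ]
    print(feature_rates(sample))  # every rate is 0.25 for this sample
```

If you compute these rates per user and group them by country, you get inputs for the country-level comparisons of feature usage described in this post.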

Why is this important? Location data is not always at hand. In Twitter data, only a tiny percentage of users share their geographic location. In our experiments, only two percent of users revealed their geographic area. Most of our users had public profiles but did not attach any geographic location.

Detecting user location and inferring cultural origins

Moreover, user location often does not match user origins. Many people travel or live abroad, and many do not match their cultural stereotypes. That said, this work opposes any kind of stereotyping and profiling. Instead, it demonstrates how machine learning tools can infer cultural origins, which may differ from the expected result or from the location reported by the user's client software. Even so, the inferred origins may be a close enough approximation to provide a state-of-the-art user experience. It is also essential that users are aware of how easily personal characteristics such as location can be mined from their social data.

What is the accuracy of inferring cultural origins?

My PhD thesis findings reveal statistically significant differences in Twitter feature usage across users' geographic locations. These differences in microblogging behaviour, together with the language set in user profiles, enabled us to infer users' countries of origin with more than 90% accuracy. The other origin-prediction approaches proposed in the thesis require neither additional data sources nor human involvement to train the models. They achieve high accuracy in country inference by exploiting information extracted from a user's follower network or data derived from Twitter profiles.
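
A simple way to exploit the follower network is a majority vote. The sketch below predicts a user's country as the most common known country among their followers. It is a generic homophily baseline that assumes followers tend to share the user's origin. It is not necessarily the method used in the thesis. The `followers` and `known_country` dictionaries and the `min_votes` threshold are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional


def infer_country(
    user: str,
    followers: Dict[str, List[str]],
    known_country: Dict[str, str],
    min_votes: int = 2,
) -> Optional[str]:
    """Predict a user's country as the majority country among their followers.

    Only followers whose country is already known (e.g. from geotagged tweets)
    vote. Returns None when fewer than `min_votes` followers have a known
    country, so that the caller can fall back to another predictor.
    Ties go to the country encountered first among the followers.
    """
    votes = Counter(
        known_country[f] for f in followers.get(user, []) if f in known_country
    )
    if sum(votes.values()) < min_votes:
        return None
    country, _ = votes.most_common(1)[0]
    return country


followers = {"alice": ["bob", "carol", "dave", "erin"]}
known_country = {"bob": "NL", "carol": "NL", "dave": "DE"}  # erin's country is unknown
print(infer_country("alice", followers, known_country))  # NL
```

The `min_votes` guard keeps the predictor from guessing based on a single follower. In practice, the vote could be combined with profile-based features rather than used on its own.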

Country inference for Twitter messages

I have shared my country inference model for Twitter messages on GitHub; see save_tweets. It is written in Python and demonstrates a simple approach to inferring user countries on Twitter. For more details and the privacy implications, see the related paper (available on ResearchGate): Daehnhardt, E., Jing, Y., & Taylor, N. 2014. Cultural and geolocation aspects of communication in Twitter. In ASE International Conference on Social Informatics 2014.

Applications

Using the origin-prediction models, we analyzed communication and privacy preferences and built a culture-aware recommender system. Our analysis of friends' replies shows that Twitter users tend to communicate primarily within their cultural regions. The usage of privacy settings showed that privacy perceptions differ across cultures. Finally, we created and evaluated movie recommendation strategies that take users' cultural groups into account, and we addressed the cold-start scenario of a new user. We believe these findings provide insights for sociological and web research, particularly on cultural differences in online communication.
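
Here is one way to illustrate the cold-start idea. A new user has no ratings yet, but if we can infer their cultural group (here, a country), we can recommend the movies rated highest by other users in that group. This is a minimal sketch under assumed conditions: the `(user, movie, rating)` layout, the `min_ratings` threshold, and the function name are hypothetical. The strategies actually evaluated in the thesis may differ.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

Rating = Tuple[str, str, float]  # (user, movie, rating): an assumed data layout


def recommend_for_new_user(
    ratings: List[Rating],
    user_country: Dict[str, str],
    new_user_country: Optional[str],
    k: int = 3,
    min_ratings: int = 2,
) -> List[str]:
    """Recommend the top-k movies by mean rating within the new user's country.

    A movie needs at least `min_ratings` ratings from the group to qualify.
    If the new user's country is unknown (None), all users' ratings are used.
    """
    by_movie: Dict[str, List[float]] = defaultdict(list)
    for user, movie, rating in ratings:
        if new_user_country is None or user_country.get(user) == new_user_country:
            by_movie[movie].append(rating)
    scored = [
        (sum(rs) / len(rs), movie)
        for movie, rs in by_movie.items()
        if len(rs) >= min_ratings
    ]
    # Highest mean first; ties are broken alphabetically by title.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [movie for _, movie in scored[:k]]


ratings = [
    ("u1", "Amelie", 5.0), ("u2", "Amelie", 4.0), ("u1", "Heat", 2.0), ("u2", "Heat", 3.0),
    ("u3", "Heat", 5.0), ("u4", "Heat", 5.0), ("u3", "Amelie", 1.0), ("u4", "Amelie", 2.0),
]
user_country = {"u1": "FR", "u2": "FR", "u3": "US", "u4": "US"}
print(recommend_for_new_user(ratings, user_country, "FR"))  # ['Amelie', 'Heat']
print(recommend_for_new_user(ratings, user_country, None))  # ['Heat', 'Amelie']
```

In this invented example, the group-aware ranking for a French user differs from the global ranking. A real system would need enough ratings per group for this to be meaningful.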

How was it all done?

I used several computer tools to code my experiments and run statistical hypothesis tests. Writing the scripts and prototypes required some software engineering knowledge, in particular:

- running long jobs while collecting big data;
- data wrangling with Python pandas (social media data is generally “dirty” and requires cleaning);
- MySQL and Redis for data storage;
- Bash scripting for automated routines such as dataset archiving;
- Celery for running asynchronous tasks, amongst other tools.

Although I initially intended to use Java, I quickly switched to Python and never looked back. Python has everything needed for:

- running statistical tests;
- rapidly applying machine learning algorithms, such as those provided by scikit-learn;
- fairly recent developments from the scientific community, such as Rendle's Factorisation Machines;
- the very recent TensorFlow.
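
Factorisation Machines come up above. Here is a minimal pure-Python sketch of the degree-2 FM prediction rule from Rendle's paper. It combines a global bias, linear weights, and pairwise feature interactions modelled as dot products of latent vectors. The efficient version uses the standard reformulation that computes the pairwise term in O(k·n) rather than O(k·n²). The parameter values and the feature encoding (for example, one-hot user, movie, and inferred country) are made up for illustration. A real model would learn them from rating data.

```python
from typing import List


def fm_predict(x: List[float], w0: float, w: List[float], V: List[List[float]]) -> float:
    """Degree-2 factorisation machine prediction in O(k * n).

    x: feature vector of length n; w0: global bias; w: n linear weights;
    V: n latent vectors, each of length k.
    """
    n, k = len(x), len(V[0])
    linear = w0 + sum(w[i] * x[i] for i in range(n))
    # Pairwise term: 1/2 * sum_f [(sum_i v_if x_i)^2 - sum_i (v_if x_i)^2]
    pairwise = 0.0
    for f in range(k):
        s = sum(V[i][f] * x[i] for i in range(n))
        s_sq = sum((V[i][f] * x[i]) ** 2 for i in range(n))
        pairwise += s * s - s_sq
    return linear + 0.5 * pairwise


def fm_predict_naive(x: List[float], w0: float, w: List[float], V: List[List[float]]) -> float:
    """The same prediction with an explicit loop over feature pairs i < j."""
    n = len(x)
    y = w0 + sum(w[i] * x[i] for i in range(n))
    for i in range(n):
        for j in range(i + 1, n):
            y += sum(a * b for a, b in zip(V[i], V[j])) * x[i] * x[j]
    return y


# Hypothetical encoding: [user_1, movie_1, movie_2, country_NL]
x = [1.0, 0.0, 1.0, 1.0]
w0, w = 3.0, [0.5, -0.2, 0.3, 0.1]
V = [[0.1, 0.2], [0.3, -0.1], [0.2, 0.4], [-0.1, 0.3]]
print(fm_predict(x, w0, w, V), fm_predict_naive(x, w0, w, V))  # both ≈ 4.15
```

Both functions return the same value. The reformulated one scales linearly with the number of non-zero features, which is what makes FMs practical for sparse recommender data.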

Needless to say, this kind of prototyping requires a high degree of agility and an eye on current developments in industry and research. Unit testing and Git for version control were essential. Jupyter Notebook is another excellent tool. It helped me run code tests, update performance graphs, and include tables, LaTeX formulas, and descriptions, and then present everything interactively, both locally and on GitHub.

Not every test, however, started out as code. The Microsoft Azure platform provided a great set of machine learning tools that helped me rapidly test initial ideas. Microsoft's generous gift of server resources was especially valuable at a critical moment when the project risked scope creep. In my experience, rapid tests and prototyping are excellent ways to check hypotheses and run pilot tests. This matters especially in research: we start from a set of hypotheses to test, and it is often hard to know beforehand whether they hold. For instance, would factoring in users' cultural origins benefit movie recommendations? Movie recommendation tests in Microsoft Azure showed me that not all recommendation algorithms benefit from the inferred user origins. Recommendation performance later became a cornerstone of my thesis chapter, “Culture-aware Social Recommenders.”
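
A quick pilot check of whether a cultural signal helps at all is to compare a plain baseline with a group-aware one. The sketch below compares a per-movie mean predictor with a per-(country, movie) mean predictor, scored by RMSE on held-out ratings. It is a toy illustration with invented data and function names. It does not reproduce the Azure experiments or the thesis results. Whether the group-aware baseline wins depends entirely on the data.

```python
import math
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple

Rating = Tuple[str, str, float]  # (user, movie, rating): an assumed data layout


def rmse(pairs: List[Tuple[float, float]]) -> float:
    """Root mean squared error over (predicted, actual) pairs."""
    return math.sqrt(mean((p - a) ** 2 for p, a in pairs))


def compare_baselines(
    train: List[Rating], test: List[Rating], user_country: Dict[str, str]
) -> Dict[str, float]:
    """RMSE of a per-movie mean versus a per-(country, movie) mean predictor."""
    global_mean = mean(r for _, _, r in train)
    movie_ratings: Dict[str, List[float]] = defaultdict(list)
    group_ratings: Dict[Tuple[str, str], List[float]] = defaultdict(list)
    for user, movie, r in train:
        movie_ratings[movie].append(r)
        country = user_country.get(user)
        if country is not None:
            group_ratings[(country, movie)].append(r)
    movie_mean = {m: mean(rs) for m, rs in movie_ratings.items()}
    group_mean = {key: mean(rs) for key, rs in group_ratings.items()}

    movie_pairs, group_pairs = [], []
    for user, movie, r in test:
        # Movies unseen in training fall back to the global mean.
        base = movie_mean.get(movie, global_mean)
        movie_pairs.append((base, r))
        # Unknown countries or unseen (country, movie) pairs fall back to the movie mean.
        group_pairs.append((group_mean.get((user_country.get(user), movie), base), r))
    return {"movie_mean": rmse(movie_pairs), "country_movie_mean": rmse(group_pairs)}


train = [("u1", "Amelie", 5.0), ("u2", "Amelie", 4.0), ("u3", "Amelie", 1.0), ("u4", "Amelie", 2.0)]
test = [("u5", "Amelie", 5.0), ("u6", "Amelie", 1.0)]
user_country = {"u1": "FR", "u2": "FR", "u3": "US", "u4": "US", "u5": "FR", "u6": "US"}
print(compare_baselines(train, test, user_country))
```

The invented data is constructed so that the groups genuinely disagree, so the group-aware baseline wins by design. On real data, the same comparison is what tells you whether the cultural signal is worth adding to a given algorithm.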

My Skills Update

Overall, I learned about social media, machine learning, and programming patterns; worked with different data structures; updated my knowledge of statistics; published papers; and presented at international conferences. In short, the primary technical skills I acquired or updated during the five years of this project were:

- Python programming language for coding the experiments, data collection, analysis, and visualisation. Python is not only fully equipped with Artificial Intelligence tools but also straightforward to learn.

- The Microsoft Azure Machine Learning platform, scikit-learn, Factorisation Machines, Random Forests, regression models, and other tools.

- Python pandas and statistics packages helped me tidy up my datasets and analyse them statistically. I could not have done without t-tests, Welch's tests, and ANOVA.

- Agile software development and maintenance were cornerstones of the project. Many researchers in academia and industry benefit from tools such as Git for version control and Docker for portable, on-the-fly development environments. To complement my agile approach with automated builds and scalability, I am now learning Jenkins. I am going to share my experience in one of the following posts.

- Running cron jobs, maintaining and cleaning my MySQL and Redis databases, and reading related papers took most of my free time. Additionally, I learned how to monitor and control system resources such as memory consumption and CPU load.

- Since the recommender experiments required me to retrain my movie rating prediction model periodically, Celery helped me run the training scripts.

- Basic human skills such as talking or writing may not seem technical. However, we follow certain practices when presenting research results at conferences or workshops: we need to follow the presentation structure and be ready for feedback. Sometimes critical feedback is the most useful. It is, however, essential not to take things personally but to learn from them :)
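
Welch's t-test, mentioned in the list above, compares two group means without assuming equal variances. That suits feature-usage rates from countries with different sample sizes and spreads. The sketch below computes the t statistic and the Welch-Satterthwaite degrees of freedom using only the standard library. In practice, `scipy.stats.ttest_ind(a, b, equal_var=False)` returns the statistic together with a p-value. The sample values (hypothetical per-user shares of tweets with links in two countries) are invented.

```python
import math
from statistics import mean, variance
from typing import Sequence, Tuple


def welch_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Return Welch's t statistic and the Welch-Satterthwaite degrees of freedom.

    Each sample needs at least two values, because `variance` is the
    sample variance (with an n - 1 denominator).
    """
    va = variance(a) / len(a)  # squared standard error of mean(a)
    vb = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


links_country_a = [0.30, 0.35, 0.40, 0.45]
links_country_b = [0.10, 0.15, 0.20]
t, df = welch_t(links_country_a, links_country_b)
print(round(t, 3), round(df, 3))  # 5.196 4.959
```

The non-integer degrees of freedom are expected. The Welch-Satterthwaite approximation adjusts them according to how unequal the two variances and sample sizes are.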

I have found that the unprecedented number of available tools can make research and development more accessible. However, it also adds overhead when designing the system architecture: how do we select tools that play well together? In my opinion, community support and good documentation are essential when choosing the tools for an application.

Related Publications

- Daehnhardt, E.A., 2018. Mining microblogs for culture-awareness in web adaptation (Doctoral dissertation, Heriot-Watt University).

- Daehnhardt, E., Taylor, N.K. and Jing, Y., 2015, October. Usage and consequences of privacy settings in microblogs. In 2015 IEEE International Conference on Computer and Information Technology; Ubiquitous Computing and Communications; Dependable, Autonomic and Secure Computing; Pervasive Intelligence and Computing (pp. 667-674). IEEE.

- Daehnhardt, E., Jing, Y. and Taylor, N., 2014, December. Cultural and geolocation aspects of communication in Twitter. In ASE International Conference on Social Informatics (Vol. 2014).

- Ilina, E., 2012, December. A user modeling oriented analysis of cultural backgrounds in microblogging. In ASE International Conference on Social Informatics 2012.

- Ilina, E., Abel, F. and Houben, G.J., 2012. Mining twitter for cultural patterns. Mensch & Computer 2012–Workshopband: interaktiv informiert–allgegenwärtig und allumfassend!?.

You can find these articles at Google Scholar.

Conclusion

My research advances our understanding of cultural differences in microblogging, using Twitter data together with statistical and machine learning tools. It demonstrates possible solutions for inferring and exploiting cultural origins to build adaptive web applications. The big data available on the Social Web makes it possible to understand user needs better and to create state-of-the-art applications tailored to users' cultural or personal needs. Many open-source and commercial tools support and drive research on Artificial Intelligence applications. However, we must be aware that personal privacy and security have become even more fragile with automated solutions. Personal data management should be in the hands of its owners.

Did you like this post? Please let me know if you have any comments or suggestions.
