Introduction

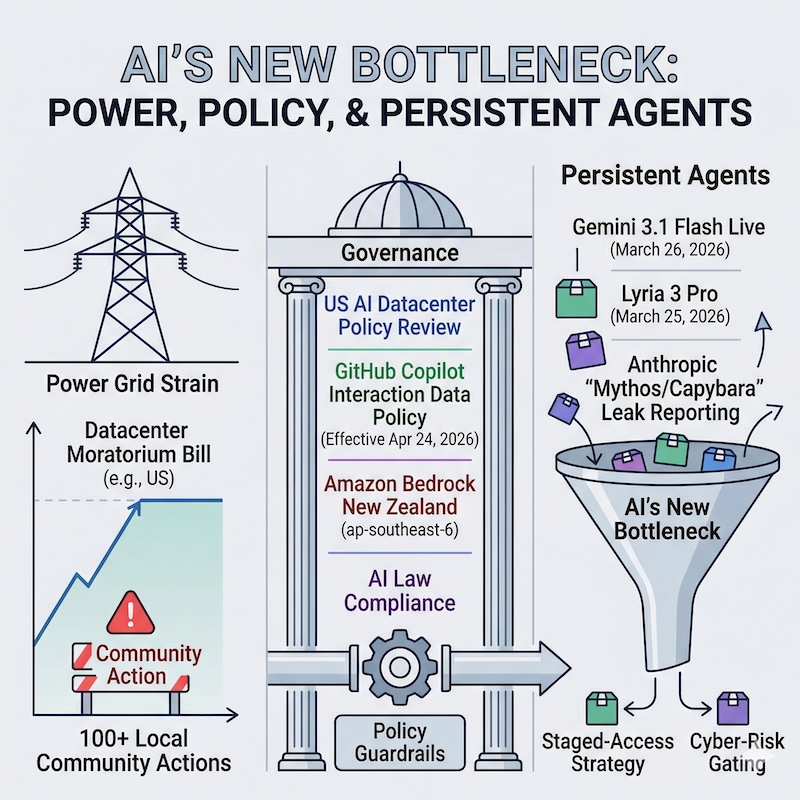

This week felt like two very different AI stories happening at the same time.

On one track, we got concrete, practical model releases — real-time voice and AI-generated music from Google. On the other, the constraints became more visible: energy and infrastructure pressure, data-privacy defaults, and a high-capability model leak that showed just how carefully labs are thinking about staged rollouts.

I find this fascinating. For a long time, the only question that seemed to matter was: how capable is the model? Now, three equally important questions run alongside it: Can we power it? Are we allowed to deploy it? And who gets access first?

This week illustrated all three constraints at once. Let me walk you through what happened.

What happened this week

- U.S. lawmakers proposed a federal pause on new AI datacenter construction.

- GitHub changed how it uses Copilot interaction data for Free, Pro, and Pro+ users.

- AWS made Amazon Bedrock available in New Zealand for the first time.

- Google launched Gemini 3.1 Flash Live, a low-latency real-time multimodal model.

- Google launched Lyria 3 Pro, an extended music generation model, in public preview.

- Details about Anthropic’s unreleased Mythos/Capybara model leaked, and Anthropic confirmed it exists.

Infrastructure and Governance

1. U.S. lawmakers proposed a federal pause on new AI datacenters

Lawmakers introduce bill to pause building of new datacenters - The Guardian

On March 25, 2026, a group of U.S. lawmakers introduced the Artificial Intelligence Data Center Moratorium Act. If passed, it would immediately halt all new AI datacenter construction in the U.S. — and block upgrades to existing facilities — until Congress passes comprehensive federal AI legislation covering worker protections, civil rights, environmental safeguards, and mandatory pre-release government review of AI products.

The bill faces steep odds in the current Congress, and critics on both sides of the aisle have pushed back on it. What makes it worth paying attention to is not whether it passes — it probably will not. What matters is the pressure it reflects.

More than 100 communities across 12 states have already enacted local datacenter moratoriums. That is a real grassroots movement that predates this bill, and it is the more durable signal regardless of what happens federally. The bill also proposes banning U.S. exports of AI computing hardware to countries without equivalent safeguards — a provision with significant implications for global AI supply chain strategy.

Takeaway: Grid-scale AI expansion is now a live federal policy debate, not just a local permitting headache.

Why this matters to you

Even a bill that never passes creates uncertainty. Uncertainty raises financing risk for new infrastructure builds. Utilities, planners, and infrastructure investors are already factoring this in. If you are building or evaluating multi-region AI deployments, it is worth watching how this pressure evolves — it is already influencing where companies choose to place compute.

2. GitHub changed Copilot data training defaults for Free, Pro, and Pro+ users

Updates to GitHub Copilot interaction data usage policy - GitHub Blog

On March 25, GitHub announced that starting April 24, 2026, interaction data from Copilot Free, Pro, and Pro+ plans may be used to train AI models unless you opt out. This covers your interactions with Copilot: the prompts you type, the suggestions you accept or reject, code snippets, and surrounding context.

Copilot Business and Enterprise plans are not affected. Students and teachers are exempt. If you had previously opted out, your preference carries over automatically.

Action item: If you are on Copilot Free, Pro, or Pro+, go to github.com/settings/copilot/features, find the Privacy section, and disable “Allow GitHub to use my data for AI model training” before April 24.

Takeaway: The default data-training posture for a very large group of developers is changing. Opt-in is becoming opt-out.

Why this matters to you

There is an important asymmetry here. Business and Enterprise customers are protected by contract. Individual developers on free and lower-tier plans are protected only by a setting they must discover and manually disable. This kind of default shift — where data collection is “on” unless you know to turn it off — is becoming a common pattern across AI products. It is worth reviewing your settings regularly, not just for GitHub, but across any AI coding tool you use.

3. Amazon Bedrock is now available in New Zealand

Run Generative AI inference with Amazon Bedrock in Asia Pacific (New Zealand) - AWS

On March 26, AWS launched Amazon Bedrock in its new Auckland region (ap-southeast-6). Requests can be routed across Auckland, Sydney, and Melbourne, or globally where permitted.

Takeaway: Stable, smaller jurisdictions with clear data-residency laws are becoming genuine strategic options for production AI inference — not just latency optimisations.

Why this matters to you

New Zealand is an interesting case. It has a predictable data-protection law, membership in the Five Eyes alliance alongside Australia, Canada, the UK, and the U.S., little domestic AI-specific regulatory risk so far, and strong enterprise buying comfort in regulated industries like finance and healthcare.

With U.S. datacenter politics becoming noisier, teams designing multi-region AI architectures are increasingly treating regional placement as risk management, not just latency tuning. New Zealand — with cross-border routing to Sydney and Melbourne already built in — fits that frame well.
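
To make the risk-management framing concrete, here is a minimal sketch of how a team might filter candidate regions by data-residency policy before it even looks at latency. The Auckland code (ap-southeast-6) comes from the AWS announcement above, and the Sydney and Melbourne codes are the standard AWS identifiers. Everything else (the `Workload` class, the `allowed_jurisdictions` field, and the two example workloads) is a hypothetical name I made up for illustration, and the sketch does not call any AWS API.

```python
from dataclasses import dataclass

# Region code -> jurisdiction. Only the region codes are real identifiers;
# the mapping itself is a simplification for this sketch.
REGIONS = {
    "ap-southeast-6": "NZ",  # Auckland
    "ap-southeast-2": "AU",  # Sydney
    "ap-southeast-4": "AU",  # Melbourne
}


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of an inference workload and its residency policy."""
    name: str
    allowed_jurisdictions: frozenset


def candidate_regions(workload, preferred_order):
    """Return the regions a workload may use, keeping the caller's preference order."""
    return [
        region
        for region in preferred_order
        if REGIONS.get(region) in workload.allowed_jurisdictions
    ]


# Hypothetical workloads: one must stay in New Zealand, one may cross to Australia.
patient_notes = Workload("patient-notes", frozenset({"NZ"}))
chat_support = Workload("chat-support", frozenset({"NZ", "AU"}))
order = ["ap-southeast-6", "ap-southeast-2", "ap-southeast-4"]

print(candidate_regions(patient_notes, order))
print(candidate_regions(chat_support, order))
```

The point of the design is the ordering of decisions: policy narrows the list first, and latency or cost only rank whatever survives.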

Model Releases and Safety Pressure

4. Google launched Gemini 3.1 Flash Live for real-time multimodal conversations

Gemini 3.1 Flash Live: Making audio AI more natural and reliable - Google

Gemini 3.1 Flash Live Preview - Gemini API docs

Google released Gemini 3.1 Flash Live on March 26, 2026. It is available in preview via the Gemini Live API and Google AI Studio.

This model handles real-time, bidirectional conversations, processing audio and video input together with low latency. What makes this technically interesting is the architecture: instead of the traditional pipeline of transcribe speech → reason → synthesise reply, the model does all three in a single native pass. This dramatically reduces latency and makes the conversation feel much more natural.

Key details:

- Benchmarks: 90.8% on ComplexFuncBench Audio (multi-step function calling in noisy environments) and 36.1% on Scale AI’s Audio MultiChallenge with “thinking” enabled — both leading scores at launch.

- Conversation continuity: Google says Gemini Live (the product experience) can follow a conversation thread for 2× longer than before. This is a product-level behavior claim, not a published raw API context-window specification for the model.

- Languages: 90+ languages supported for real-time multimodal conversations.

- Safety: All audio output is watermarked with SynthID — an imperceptible watermark embedded at generation time to help identify AI-generated audio.

- Enterprise deployments: Verizon and The Home Depot were cited as early production customers, both using it for contact centre applications.

- Search Live: The model also powers the global rollout of Search Live, now active in 200+ countries.

Takeaway: Native multimodal real-time voice, deployed in large enterprise products at launch, suggests voice interaction is consolidating into a primary layer for persistent AI agents.

Why this matters to you

The benchmark number worth watching is 90.8% on ComplexFuncBench Audio. This measures how well the model handles multi-step function calling: for example, a voice assistant that looks up your calendar, checks your email, and books a restaurant, all in a single spoken exchange in a noisy room. That capability makes voice useful for real agentic workflows, not just simple Q&A. If you are evaluating this for production use, treat the score as a starting point and run the same kind of multi-step tasks against your own use cases.
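
If "multi-step function calling" sounds abstract, the sketch below shows the shape of the problem: a plan of tool calls where a later call depends on the result of an earlier one. This is only an illustration under my own assumptions. The three tools are stubs with hypothetical names and canned return values, the `$step.key` reference syntax is something I invented for the example, and none of it reflects how the Gemini Live API or ComplexFuncBench actually represent calls.

```python
# Stub tools with canned results. Real ones would call calendar, email,
# and booking services; these names and fields are hypothetical.
def check_calendar(day):
    return {"day": day, "free_from": "19:00"}


def search_email(query):
    return {"query": query, "top_subject": "Dinner on Friday?"}


def book_restaurant(name, time):
    return {"restaurant": name, "time": time, "status": "confirmed"}


TOOLS = {f.__name__: f for f in (check_calendar, search_email, book_restaurant)}


def resolve(value, results):
    """Replace a '$<step>.<key>' reference with a value from an earlier result."""
    if isinstance(value, str) and value.startswith("$"):
        step, key = value[1:].split(".", 1)
        return results[int(step)][key]
    return value


def run_plan(plan):
    """Execute tool calls in order, feeding earlier results into later arguments."""
    results = []
    for call in plan:
        args = {k: resolve(v, results) for k, v in call["args"].items()}
        results.append(TOOLS[call["name"]](**args))
    return results


# The plan a model would need to produce from one spoken request.
plan = [
    {"name": "check_calendar", "args": {"day": "Friday"}},
    {"name": "search_email", "args": {"query": "dinner Friday"}},
    {"name": "book_restaurant", "args": {"name": "Example Bistro", "time": "$0.free_from"}},
]

results = run_plan(plan)
print(results[-1])
```

When you test a voice model on your own workflows, the failure modes to look for are the ones this structure exposes: a wrong tool name, a wrong argument, or a dependency on an earlier step that gets dropped.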

5. Google launched Lyria 3 Pro for developers

Build with Lyria 3, our newest music generation model - Google

Lyria 3 Pro: Create longer tracks in more Google products - Google

Lyria 3 (the base model) launched in February 2026. On March 25, Google added Lyria 3 Pro — the extended-capability tier. Both are now in public preview for developers worldwide via the Gemini API and Google AI Studio.

The two tiers are designed for different use cases:

- Lyria 3 (base model): Fast and efficient, generates 30-second audio clips. Best for rapid prototyping, background loops, and social content.

- Lyria 3 Pro: Generates full tracks up to 3 minutes. The key difference is structural awareness — you can specify an intro, verse, chorus, bridge, and outro directly in your prompt, and the model understands song structure rather than producing one undifferentiated audio block.
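
To show what specifying song structure in a prompt could look like, here is a small sketch that assembles a sectioned prompt and checks that the planned length stays within the 3-minute limit. The prompt layout (a style line followed by bracketed, time-stamped sections) is purely my assumption and not a documented Lyria format, and the helper name `build_structured_prompt` is hypothetical. The sketch only builds a string and does not call the Gemini API.

```python
MAX_TRACK_SECONDS = 180  # the 3-minute limit mentioned above for Lyria 3 Pro


def build_structured_prompt(style, sections):
    """Build a sectioned prompt from (label, seconds, description) tuples.

    The layout is an assumed convention for illustration only.
    """
    total = sum(seconds for _, seconds, _ in sections)
    if total > MAX_TRACK_SECONDS:
        raise ValueError(f"Planned length {total}s exceeds {MAX_TRACK_SECONDS}s")

    lines = [f"Style: {style}"]
    start = 0
    for label, seconds, description in sections:
        end = start + seconds
        span = f"{start // 60}:{start % 60:02d}-{end // 60}:{end % 60:02d}"
        lines.append(f"[{label} {span}] {description}")
        start = end
    return "\n".join(lines)


prompt = build_structured_prompt(
    "warm indie folk, acoustic guitar, 96 BPM",
    [
        ("Intro", 15, "solo fingerpicked guitar"),
        ("Verse", 45, "soft vocals enter, sparse percussion"),
        ("Chorus", 30, "full band, layered harmonies"),
        ("Bridge", 30, "drop to piano and voice"),
        ("Outro", 20, "guitar motif returns and fades"),
    ],
)
print(prompt)
```

Whatever format the model ends up preferring, planning sections and durations up front makes it easier to compare outputs across prompt variations.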

Both tiers support tempo conditioning, time-aligned lyrics (the model can synchronize generated vocals to a beat), multimodal image-to-music input (describe or show an image and generate matching music), and realistic vocal generation across many languages and genres. All output is SynthID-watermarked.

Lyria 3 Pro is also available on Vertex AI for enterprise-scale audio generation, in Google Vids (rolling out to Workspace users the week of March 25), and through ProducerAI — a collaborative music production tool Google recently acquired.

Takeaway: Music generation is moving from standalone novelty to platform feature, subject to the same infrastructure and policy constraints as every other AI capability.

Why this matters to you

The pace of iteration here is striking: Lyria 2 launched in April 2025, Lyria 3 in February 2026, and Lyria 3 Pro just one month later in March 2026. My read is that model quality is no longer the main bottleneck; what remains is market readiness, which moves faster when distribution already runs through a cloud platform. If you are building products that involve music, audio branding, or creative media, these APIs are starting to look like platform infrastructure rather than experimental tools, though both tiers are still in public preview.

6. Anthropic’s Mythos/Capybara model leaked — and Anthropic confirmed it is real

Details leak on Anthropic's "step-change" Mythos model - Techzine

Anthropic acknowledges testing new model after leak - Fortune (paywalled)

On March 26–27, Fortune and Techzine published details from internal Anthropic draft materials that became publicly accessible due to a content management system misconfiguration. The CMS defaulted all uploaded assets to public URLs unless manually changed — approximately 3,000 unpublished assets were exposed as a result. The leak was discovered by security researchers Roy Paz (LayerX Security) and Alexandre Pauwels (University of Cambridge), who notified Fortune. After being informed, Anthropic restricted access.
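
The failure mode here is worth spelling out, because it is so easy to reproduce: when "public" is the default, every forgotten toggle becomes an exposure. The sketch below shows the opposite default plus a simple audit for assets that are public but not yet published. It is a generic illustration, not a reconstruction of Anthropic's CMS; the `Asset` class, its fields, and the file paths are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    """Hypothetical CMS asset record."""
    path: str
    published: bool       # has the content been officially released?
    public: bool = False  # safe default: private unless someone opts in


def exposed_unpublished(assets):
    """Return assets that are reachable publicly but were never published."""
    return [a for a in assets if a.public and not a.published]


assets = [
    Asset("blog/launch-post.md", published=True, public=True),
    Asset("drafts/unreleased-post.md", published=False, public=True),  # the risky case
    Asset("drafts/internal-notes.md", published=False),                # private by default
]

for asset in exposed_unpublished(assets):
    print(f"Exposed draft: {asset.path}")
```

A check like this is cheap to run on a schedule, and it catches the gap between what a team believes is private and what a URL actually serves.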

The leaked materials refer to two overlapping names for the same model: Mythos is the model name; Capybara is the tier name — a new fourth tier positioned above Opus in Anthropic’s existing Opus/Sonnet/Haiku hierarchy. The draft describes Capybara as “larger and more intelligent than our Opus models — which were, until now, our most powerful,” with scores “dramatically higher” than Claude Opus 4.6 on coding, academic reasoning, and cybersecurity benchmarks.

The cybersecurity dimension is the sharpest detail in the leak. The draft states the model is “currently far ahead of any other AI model in cyber capabilities” and warns that it “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.” Because of this, Anthropic’s rollout plan gives cyber defenders access first. The reasoning is that the organizations most likely to be targeted by adversaries using this capability should have a window to harden their systems before wider availability.

The leaked materials also described an incident in which a Chinese state-sponsored group conducted a coordinated campaign using Claude Code to infiltrate approximately 30 organisations, including tech companies, financial institutions, and government agencies, before Anthropic detected and disrupted it.

In a statement to Fortune, an Anthropic spokesperson confirmed: “We’re developing a general-purpose model with meaningful advances in reasoning, coding, and cybersecurity. Given the strength of its capabilities, we’re being deliberate about how we release it. As is standard practice across the industry, we’re working with a small group of early access customers to test the model. We consider this model a step change and the most capable we’ve built to date.”

No release date has been announced.

Takeaway: The leak itself is less important than what it confirms: frontier labs are now making deliberate, sequenced deployment decisions based on documented threat models, not marketing windows.

Why this matters to you

Defender-first access is not a PR move — it is a practical safety strategy. It creates a window in which the organisations most likely to face AI-assisted attacks can prepare before adversaries have equivalent capabilities. Whether or not Mythos/Capybara ships broadly, the release logic it represents is likely to become standard practice for any model that scores highly on cybersecurity benchmarks. If you work in security, or if your organisation is a likely target, this kind of staged release timeline is something to build your threat modelling around — not just react to.

Closing Thoughts

What struck me most about this week is how much the conversation has shifted.

We used to talk almost exclusively about model capability — which model is smarter, which benchmark leads. That still matters. But the more durable questions are becoming: Can we power it? Where can we legally deploy it? And who gets access first, and why?

The answer to all three is becoming more complicated. Energy demand for AI keeps climbing, and deployment is now running directly into hard limits: grid capacity, permitting politics, and deliberate release gating shaped by safety concerns. Deployment capacity, not model quality alone, is becoming the real constraint.

Teams that plan for both tracks — capability and the constraints on where and how it can ship — will move faster than those who focus on capability alone.

Did you find this useful? I would love to hear your thoughts. Let me know if you have comments or suggestions!