LLM

AI

Python

Life

Weekly

Apps

Git

chatGPT

Blogging

TensorFlow

ML

genAI

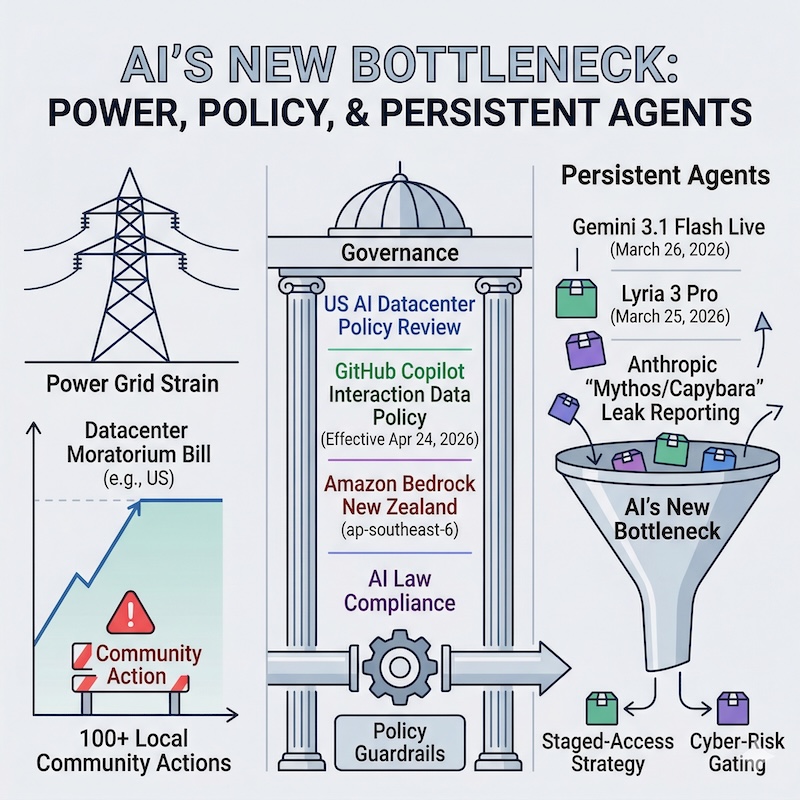

AI Law

DL

NLP

GenAI

MAC

Preprocessing

SEO

AI-Dev

AI News

AI Safety

Agentic

CLI

Ethics

Conda

History

News

PhD

Regression

Research

Series

Setup

AI Engineering

AI Regulation

AI-Safety

Antigravity

Automation

CNN

Classification

Cursor

Data

Development Tools

Edge AI

Fine Tuning

Food

GitHub

IDE

Kaggle

Mac

Mixed Precision

OS

RS

Robots

Study

Transfer Learning

ai-dev

research